Ensuring Quality in AI-Driven Qualitative Research

Human oversight, data controls, and strong moderation logic behind reliable AI qual.

Data quality is one of the first concerns research buyers raise when they evaluate AI-moderated qualitative research. The reason is understandable: decisions depend on the quality of inputs.

Introduction: Why quality is the key question

Respondent attention is increasingly fragmented. Research no longer competes only with other surveys or interviews; it competes with everything else on the screen. Respondents are less patient, less focused, and more likely to disengage than they were in traditional research settings.

In AI-moderated research, this concern becomes even sharper. In most cases clients cannot see respondents, which naturally raises questions about bots, participant engagement, answer depth, and the reliability of both the automated moderation and the research outcomes.

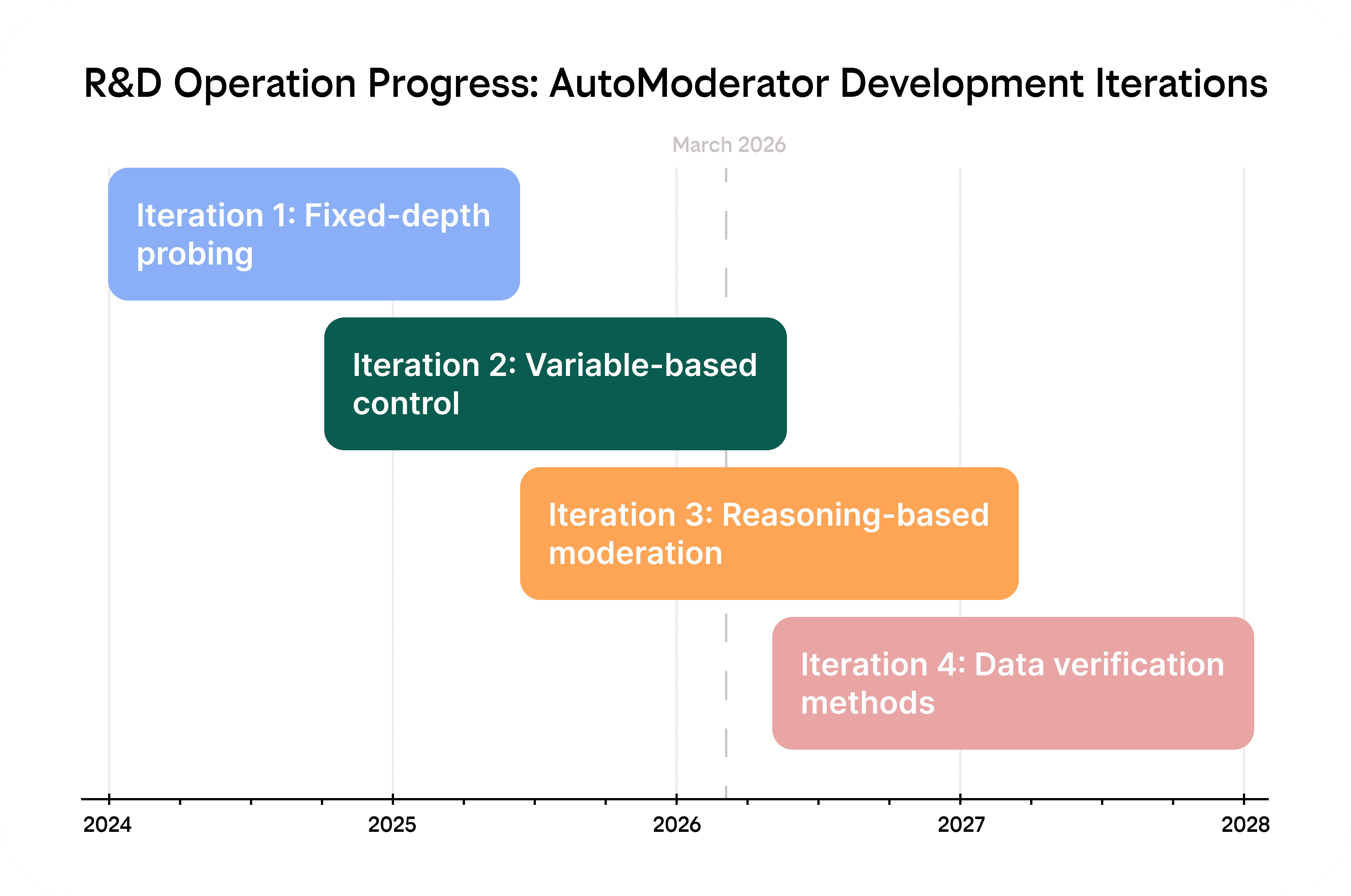

At Yasna, we have treated quality as a core design problem since the company was founded in 2024. Since then, we have continuously refined both the moderation logic and the quality-control system around it through multiple iterations, project-based testing, live experiments, and expert review.

Our goal has always been to make qualitative research fundamentally more efficient without giving up responsibility for meaning, business relevance, and research quality.

How we define quality

For us, quality means five things at once: human oversight over critical stages of the process, rigorous respondent quality control, strong moderation quality, a methodology that increases relevance rather than just volume, and ongoing platform testing and updates.

Here is a brief description of each.

Human-in-the-Loop. Human experts remain actively involved at all stages of the process to set direction, validate interpretations, and ensure business relevance.

Data Quality Control. We use automated and manual checks to verify respondent reliability, data completeness, and the overall fitness of the output for decision-making.

Moderation Quality. We define moderation quality as the AI moderator’s ability to probe naturally, engage respondents, stay on task, adapt to responses, and collect rich, relevant, and comparable data.

Iterative Methodology. We use staged research designs in which humans review interim findings and use them to refine both the ideas under development and the design of the next stage.

Continuous Updates & Testing. We treat quality as an ongoing discipline, with moderation logic improvements, platform testing, and validation continuously informed by real project delivery.

Each of these layers is described in more detail below.

1. The core principle: Human-in-the-Loop

The foundation of our approach is Human-in-the-Loop. We use AI to accelerate the parts of qualitative research that are safe to automate, while keeping human experts actively involved throughout the process, especially at the stages where responsibility for meaning is highest.

A useful way to understand how responsibilities are split between AI and humans is through four levels: Data, Information, Knowledge, and Decision.

| Levels of knowledge creation | Role of AI | Role of humans |
|---|---|---|
| Data - raw facts and observations without context or meaning. | Conducts interviews, captures responses, cleans data, and prepares the dataset. | Designs the research: defines objectives and tasks, writes the guide, sets quality criteria, and oversees the process. |
| Information - interpreted and organized data that answers basic questions such as who, what, where, and when. | Structures responses, tags and summarizes content, and ensures comparability of results. | Sets the interpretation frame and clarifies what is truly important for the task. |
| Knowledge - a structured understanding of patterns and cause-and-effect relationships that makes it possible to explain phenomena and draw grounded conclusions. | Detects patterns, builds hypotheses, connects signals, and accelerates synthesis. | Validates interpretations, creates meaning, and connects conclusions to business context. |
| Decision - a conscious choice of a course of action based on knowledge, including acceptance of risk and responsibility for business consequences. | Does not make decisions, but highlights options, risks, and trade-offs to support human decision-making. | Makes decisions and takes responsibility for the chosen direction and its business consequences. |

AI plays a stronger role at the data and information levels. It conducts interviews, structures responses, tags and summarizes content, and supports first-pass analysis.

Humans own the higher-order layers: Knowledge and Decision. They validate interpretations, connect findings to business context, create meaning, formulate recommendations, and take responsibility for the final outcome.

However, human involvement is not limited to these final stages. Researchers remain actively embedded throughout the project lifecycle.

2. Data Quality Control

The first layer of quality assurance is data quality. It comes first because poor-quality sample traffic cannot be fully fixed later in the process. This layer covers respondent quality and answer quality, and it combines automated and manual review.

For sampling, we work only with trusted panel providers who already have their own quality-control procedures and reconciliation processes. Partner-side standards reduce risk at the source, while our own controls add an independent layer on top.

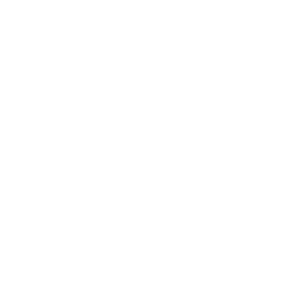

On the platform side, we monitor multiple technical signals associated with suspicious participation. These include:

- bot-like behavior,
- abnormal typing patterns,
- copy-paste behavior instead of natural input,
- device and environment anomalies,
- and other technical traces.

This respondent answered too quickly and used another AI tool to answer the questions. The interview was flagged with a low quality score and excluded from analysis.

No single signal is treated as absolute proof. Instead, we use a combination of indicators to estimate risk more reliably. This matters because fraud detection in modern online research is probabilistic by nature and requires a multi-signal approach.
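To illustrate why combining indicators is more robust than relying on any one check, here is a minimal Python sketch that merges several signals into a single risk estimate. The signal names, weights, threshold, and the noisy-OR combination rule are all illustrative assumptions, not Yasna's actual scoring model. Each weight is deliberately kept below the review threshold, so no single signal can flag an interview on its own, and a flagged interview is routed to a human rather than excluded automatically.

```python
# Hypothetical weights: how strongly each signal (scored 0.0-1.0) suggests
# suspicious participation. Every weight is below REVIEW_THRESHOLD, so one
# signal alone, even at full strength, never triggers a flag.
SIGNAL_WEIGHTS = {
    "bot_like_behavior": 0.45,
    "abnormal_typing": 0.35,
    "copy_paste": 0.40,
    "device_anomaly": 0.30,
}
REVIEW_THRESHOLD = 0.5  # hypothetical cut-off for sending an interview to a human


def risk_score(signals: dict[str, float]) -> float:
    """Combine signals with a noisy-OR: risk = 1 - prod(1 - weight * strength)."""
    survive = 1.0  # probability that none of the signals indicates fraud
    for name, strength in signals.items():
        weight = SIGNAL_WEIGHTS.get(name, 0.0)   # unknown signals are ignored
        strength = min(max(strength, 0.0), 1.0)  # clamp strength to [0, 1]
        survive *= 1.0 - weight * strength
    return 1.0 - survive


def route(signals: dict[str, float]) -> str:
    """Return 'accept' or 'human_review'; a human makes the final exclusion call."""
    return "human_review" if risk_score(signals) >= REVIEW_THRESHOLD else "accept"
```

With these assumed weights, full-strength copy-paste alone scores 0.40 and is accepted, while copy-paste combined with abnormal typing scores 0.61 and is sent for human review.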

A real participant can still provide weak material. That is why we also evaluate whether answers are sufficiently complete, specific, meaningful, and relevant to the research task.

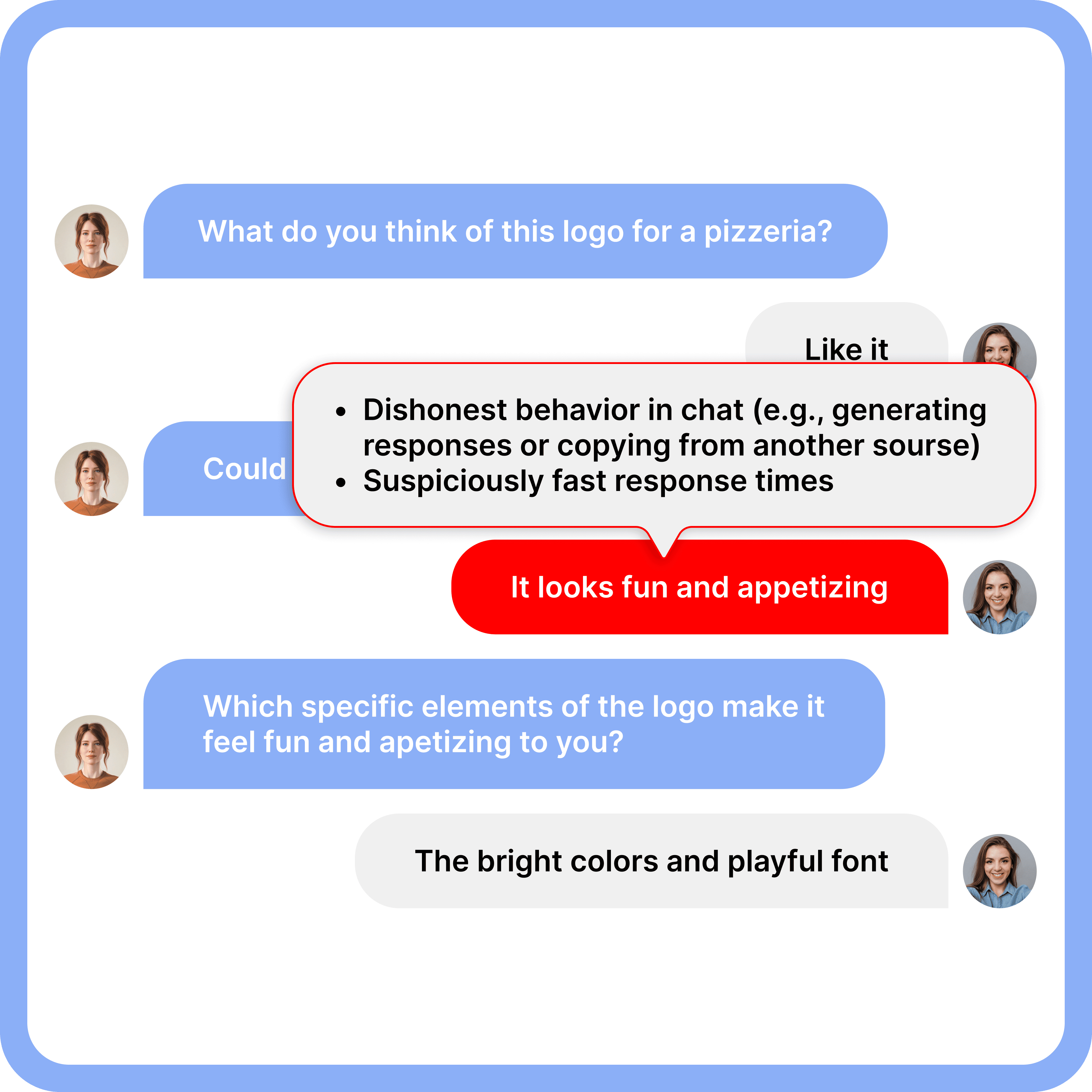

Good response quality: detailed, sufficient answers.

Poor response quality: a disengaged respondent giving vague answers. The platform flagged the interview automatically, a human expert then checked it manually, and it did not qualify for analysis.

Conversational interviewing is, by design, a data collection format that can improve data quality. Compared to surveys, it feels more natural, keeps respondents engaged, and helps them elaborate step by step instead of asking them to produce a fully formed, detailed answer in one go.

3. Moderation Quality

The next layer of quality assurance is moderation quality: how well the interview itself is conducted. Good automated moderation means full topic coverage, adequate probing, a logical flow, sufficient answer depth, and a respondent experience that keeps people engaged rather than exhausted. This matters especially in chat-based qualitative research, where superficial answers are easy to get unless the moderator knows when to go deeper.

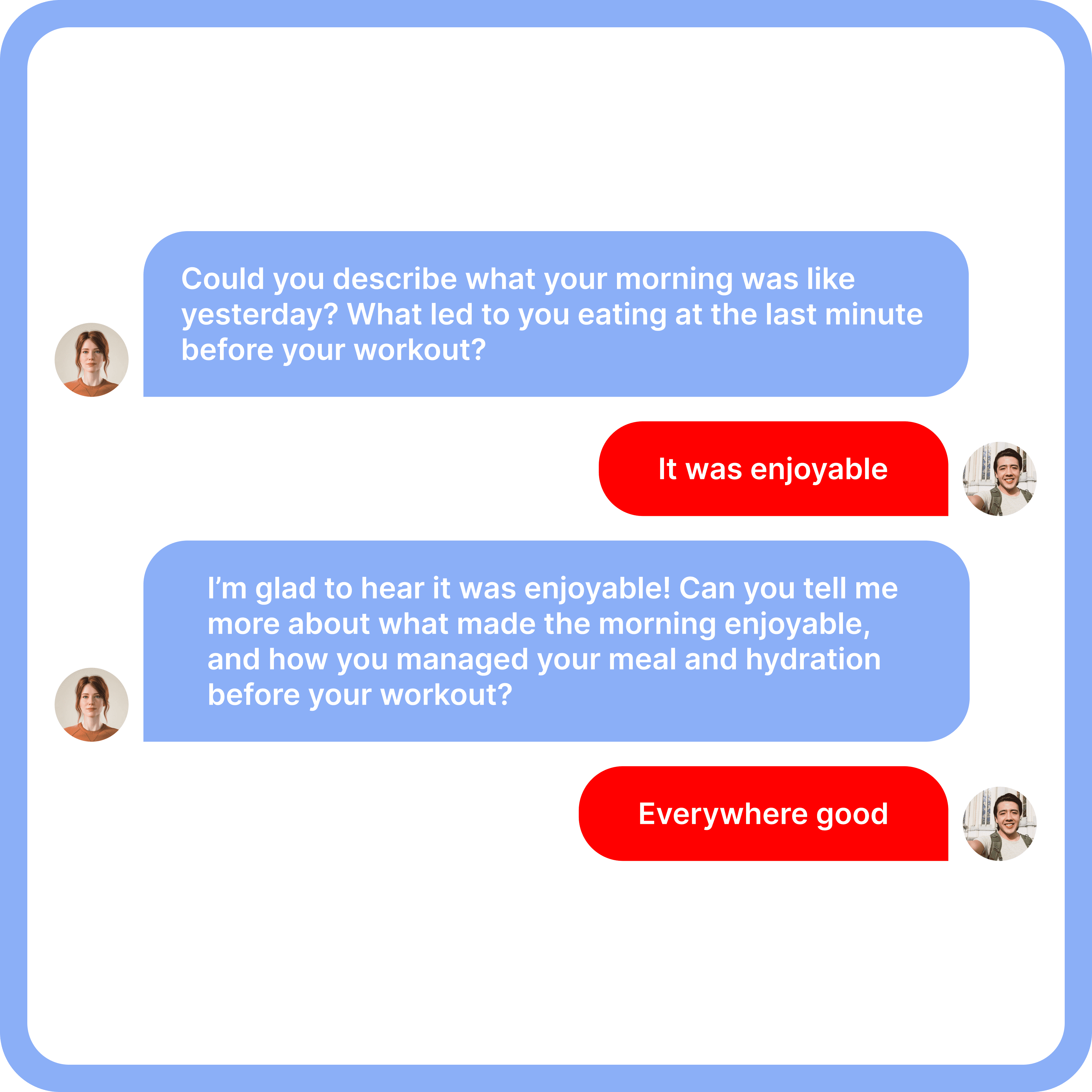

Our moderation system is designed to work like an experienced qualitative researcher rather than a rigid script. It does not simply ask questions in sequence; it checks whether the necessary information has actually been collected and continues probing when needed.

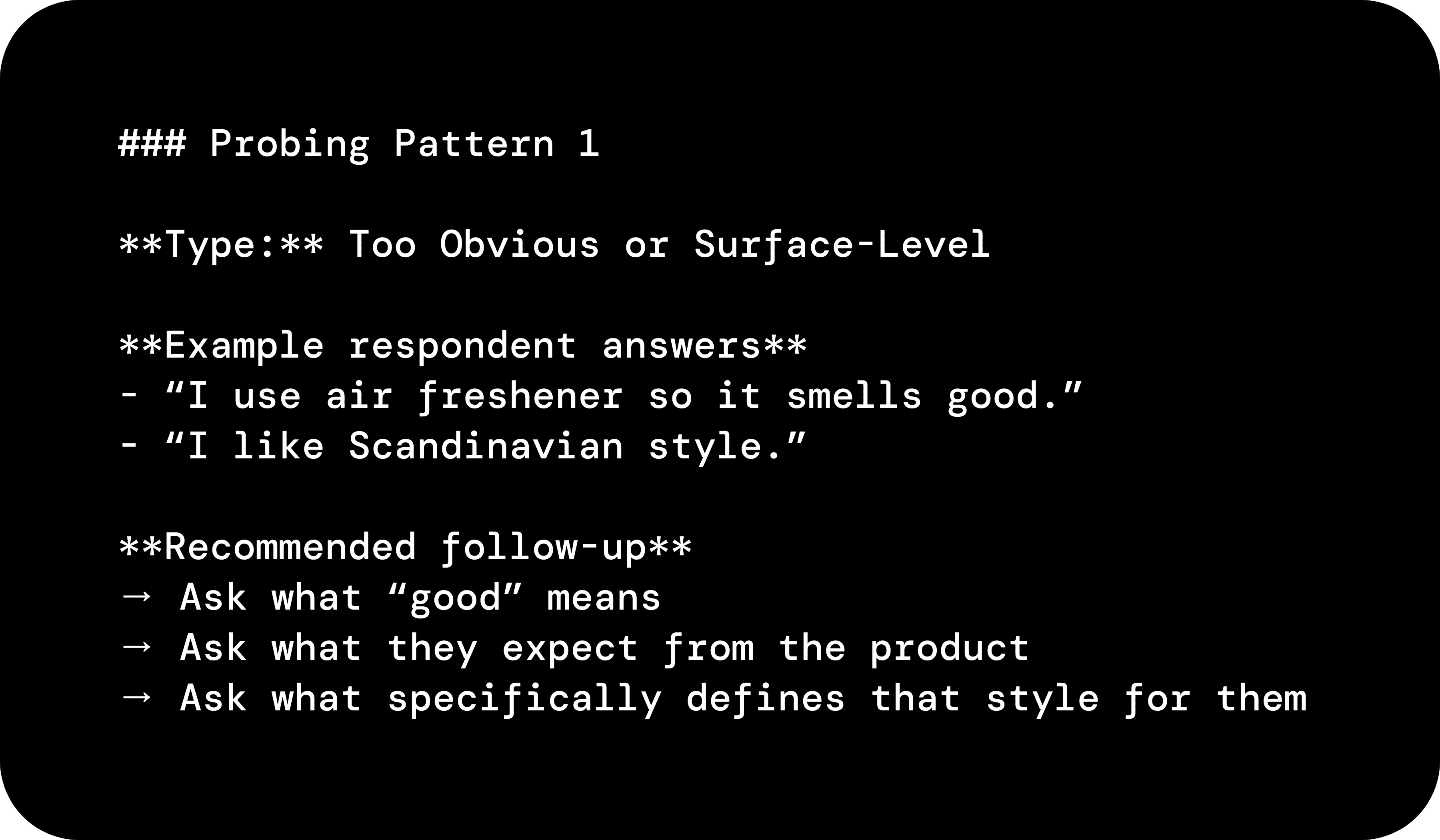

It also uses expert-defined probing patterns developed through repeated testing to identify answers that seem acceptable on the surface but still lack clarity, depth, or specificity.

This is one of the probing patterns used to prompt the AutoModerator.

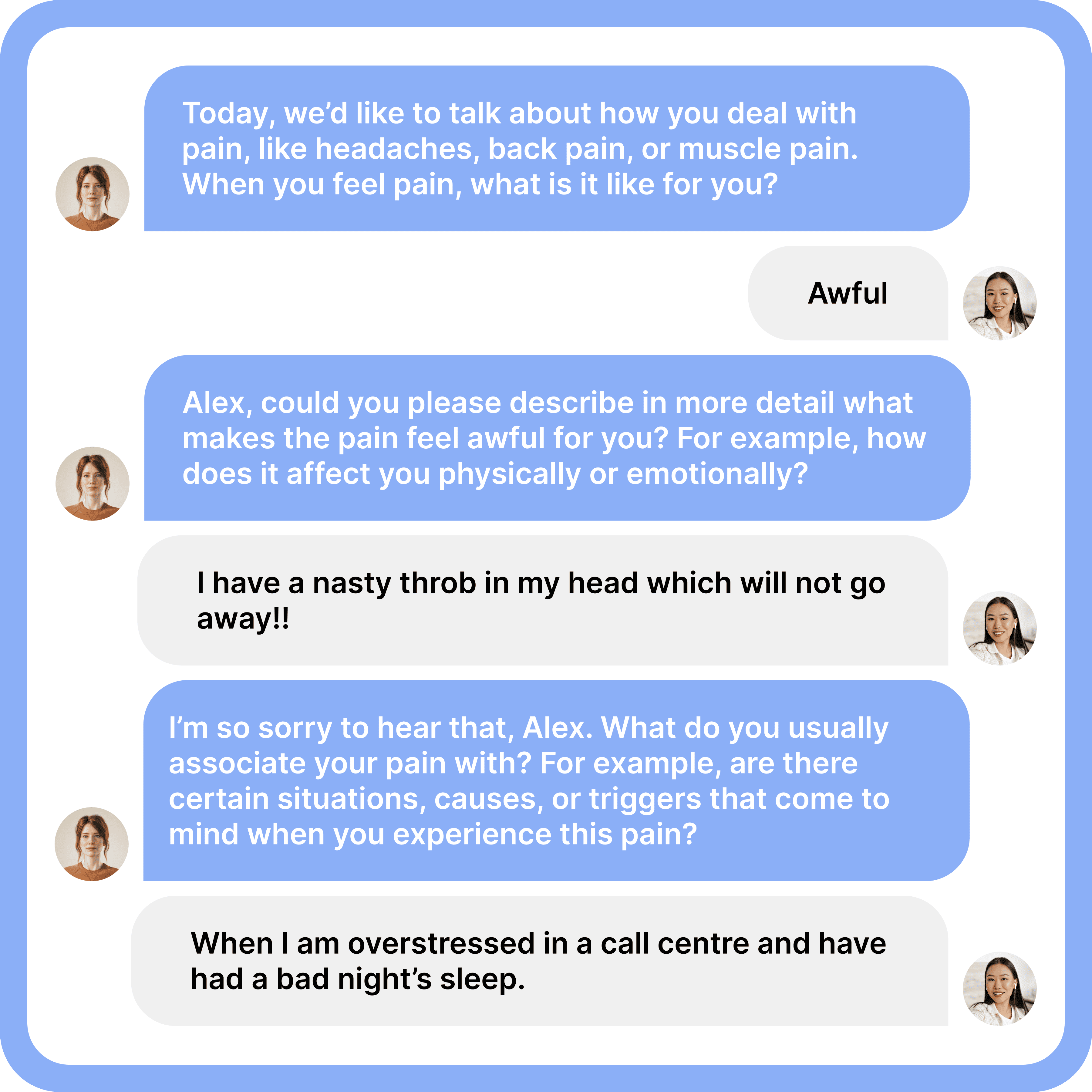
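To make the idea of probing patterns more concrete, here is a minimal Python sketch of a pattern library that pairs markers of surface-level vagueness with follow-up questions. The three regular expressions, the word-count thresholds, and the `select_probe` function are hypothetical illustrations; Yasna's real patterns are written and tested by research experts and are applied by an LLM-based moderator with full conversational context, not by keyword matching.

```python
import re
from typing import Optional

# Hypothetical pattern library: each regex marks an answer that looks acceptable
# on the surface but lacks specificity, paired with a follow-up template.
PROBING_PATTERNS = [
    (re.compile(r"\b(good|nice|fine|okay|great)\b", re.IGNORECASE),
     "What specifically makes it feel {match} to you?"),
    (re.compile(r"\bit depends\b", re.IGNORECASE),
     "What does it depend on? Could you give an example?"),
    (re.compile(r"\b(always|never|everyone|nobody)\b", re.IGNORECASE),
     "Can you recall a specific time when that happened?"),
]


def select_probe(answer: str, long_enough: int = 25, too_short: int = 8) -> Optional[str]:
    """Return a follow-up question for a vague answer, or None if no probe is needed."""
    words = answer.split()
    if len(words) >= long_enough:
        return None  # assumption: long answers are left to other quality checks
    for pattern, template in PROBING_PATTERNS:
        match = pattern.search(answer)
        if match:
            # Templates without a {match} placeholder simply ignore the argument.
            return template.format(match=match.group(0).lower())
    if len(words) < too_short:
        return "Could you tell me a bit more about that?"  # generic fallback probe
    return None
```

For example, `select_probe("It's good.")` returns "What specifically makes it feel good to you?", while a concrete answer such as "I use the app on my commute to check prices before I buy groceries" returns `None`.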

Yasna also uses human-like elements that improve cooperation and comfort: addressing respondents by name, adapting wording to what they just said, keeping probes contextual rather than generic, and maintaining a conversational tone. These details matter because they help uncooperative or tired respondents stay engaged long enough to provide meaningful depth.

The Yasna AI agent turns a vague answer into a specific, sufficient one through targeted probing.

Moderation quality is also supported by our platform's multi-agent architecture. Different agents handle different parts of the moderation task: one manages the logic and flow of the interview, another supports probing, another evaluates whether enough useful information has been collected, and others can monitor data-quality signals in parallel. This separation helps us balance completeness, flexibility, and respondent experience, and it creates internal control points within the moderation process itself.
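The division of labor described above can be sketched in Python as a set of small functions, each with a single responsibility. Everything here is a hypothetical simplification: the agent names, the word-count sufficiency rule, the per-question probe budget, and the one-word-answer quality check stand in for what would be LLM-based agents in a production system. The sketch only shows how separating flow, probing, sufficiency, and quality monitoring creates distinct control points.

```python
from dataclasses import dataclass, field
from typing import Optional

MAX_PROBES = 2  # hypothetical per-question follow-up budget
MIN_WORDS = 12  # hypothetical sufficiency bar; a real evaluator would be an LLM


@dataclass
class InterviewState:
    guide: list[str]  # question ids in discussion-guide order
    answers: dict[str, list[str]] = field(default_factory=dict)
    quality_flags: list[str] = field(default_factory=list)

    def record(self, qid: str, answer: str) -> None:
        self.answers.setdefault(qid, []).append(answer)
        quality_agent(self, qid, answer)  # the monitor runs on every turn


def sufficiency_agent(state: InterviewState, qid: str) -> bool:
    """Has enough useful information been collected for this question?"""
    words = sum(len(a.split()) for a in state.answers.get(qid, []))
    return words >= MIN_WORDS


def probing_agent(state: InterviewState, qid: str) -> Optional[str]:
    """Decide whether to ask the question, probe further, or give up on it."""
    n = len(state.answers.get(qid, []))
    if n == 0:
        return "ask"
    if n <= MAX_PROBES:  # n - 1 probes used so far
        return "probe"
    return None  # budget exhausted: move on instead of tiring the respondent


def quality_agent(state: InterviewState, qid: str, answer: str) -> None:
    """Parallel data-quality monitor; here it only flags one-word answers."""
    if len(answer.split()) <= 1:
        state.quality_flags.append(qid)


def flow_agent(state: InterviewState) -> tuple[str, Optional[str]]:
    """Walk the guide in order and return the next (action, question id)."""
    for qid in state.guide:
        if sufficiency_agent(state, qid):
            continue  # enough information collected; move to the next question
        action = probing_agent(state, qid)
        if action is not None:
            return action, qid
    return "end", None
```

Because each agent owns one decision, any of them can be tested or tightened independently, for example by raising the sufficiency bar without touching the flow logic.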

4. Iterative methodology as a quality mechanism

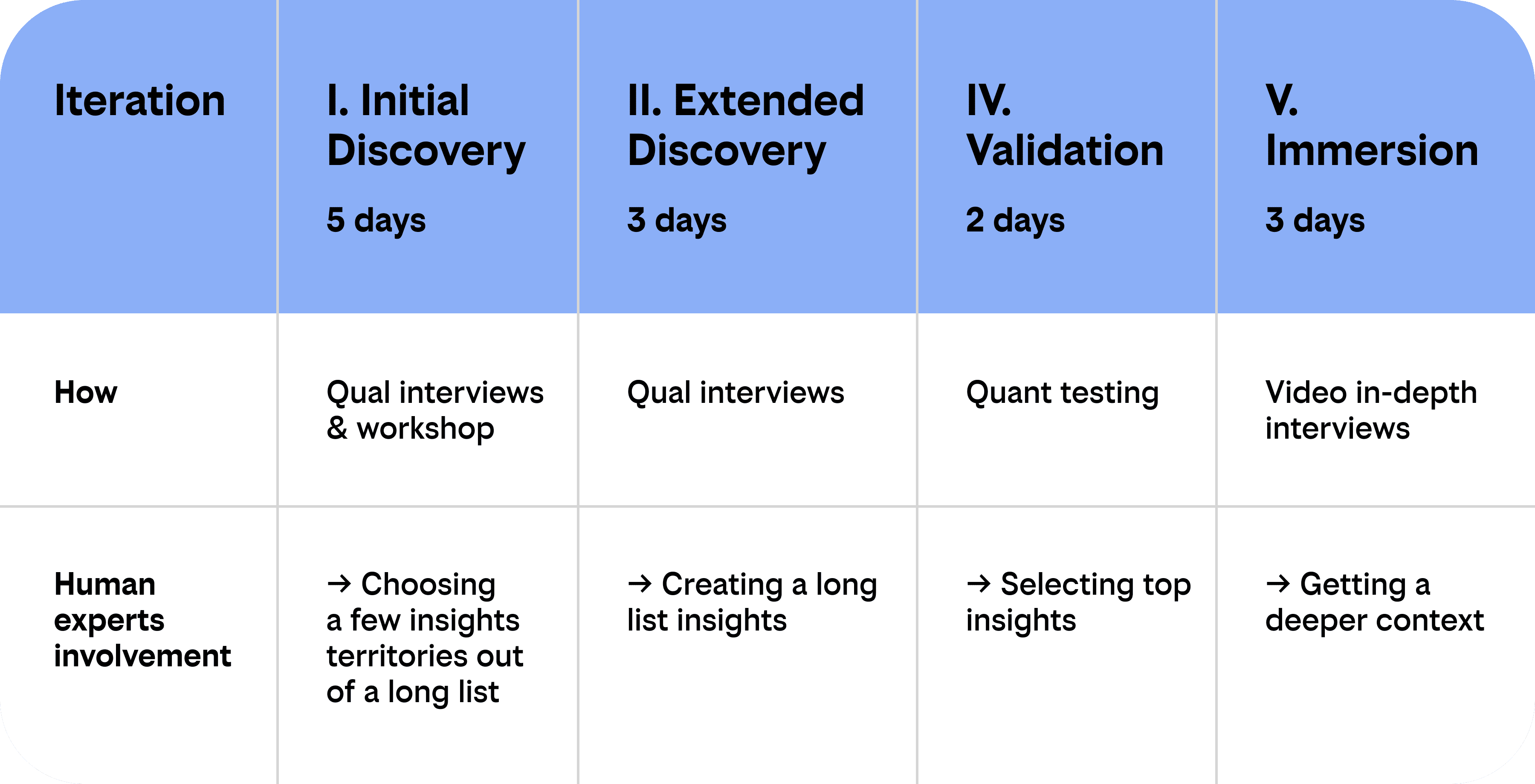

At Yasna, we treat iterative methodology as part of quality assurance. Instead of trying to solve everything in one long linear study, we run research in several focused stages.

This is the logic behind our iterative Discovery process: after each stage, we pause to review the results together with the client and align on what should be adjusted before the next step.

In practice, our Discovery process works as four short, focused research projects. At the beginning, when the goal is to generate innovation opportunities, we explore the territory as broadly as possible. Within a few days, we bring back a wide range of findings and discuss them with the client, jointly identifying the most promising territories for the next stage. We then explore these selected territories in more detail, develop insights together with the client, validate them quantitatively, and add illustrative evidence that helps the business better understand consumers.

This keeps the process controllable and the learning cumulative. Shorter, focused sessions also tend to produce better respondent engagement than a single 40-60 minute interview.

5. Continuous quality assurance

Because LLMs and other platform components keep evolving, Yasna regularly tests its moderation and analytical logic after model updates and internal changes. Before any change goes live, experts test it in dedicated test projects to confirm that the new behavior improves what it is meant to improve without breaking what already works.

This process is ongoing: QA specialists and research experts constantly monitor moderation outcomes, and corrections are applied to both the automoderation logic and the analytical modules.

Larger logic updates happen at the architecture level, and smaller prompt-level and workflow improvements are introduced continuously based on ongoing testing and learning from real project delivery, especially as new edge cases or weaknesses become visible.
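The pre-release check described in this section, improving what is needed without breaking what already works, is essentially a regression gate. Below is a minimal Python sketch under simplifying assumptions: a moderation "policy" is reduced to a function that decides whether to probe a given answer, and each test case pairs a scripted answer with the behavior experts expect. The names `Case`, `Policy`, `passes`, and `release_gate` are hypothetical and do not describe Yasna's internal tooling.

```python
from typing import Callable

# Hypothetical test case: (scripted respondent answer, whether experts expect a probe).
Case = tuple[str, bool]
# Hypothetical policy: given an answer, decide whether the moderator should probe.
Policy = Callable[[str], bool]


def passes(policy: Policy, case: Case) -> bool:
    """True if the policy behaves the way experts expect on this case."""
    answer, should_probe = case
    return policy(answer) == should_probe


def release_gate(current: Policy, candidate: Policy,
                 suite: list[Case], targets: list[Case]) -> bool:
    """Approve the candidate only if it fixes every targeted case and breaks
    no case that the current version already handles correctly."""
    regressions = [c for c in suite if passes(current, c) and not passes(candidate, c)]
    unfixed = [c for c in targets if not passes(candidate, c)]
    return not regressions and not unfixed
```

For instance, a candidate that adds probing on "it depends" answers while keeping the existing short-answer rule would pass the gate, whereas a candidate that drops the short-answer rule would be rejected as a regression.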

Conclusion

AI makes qualitative research fundamentally more efficient. But this does not have to come at the expense of quality. At Yasna, quality is ensured at multiple levels: through human oversight, automated and manual respondent and data-quality controls, robust human-like moderation logic, iterative methodology, and continuous testing.

Human experts remain a core part of the quality system. They are responsible for aligning business and research objectives, designing the right methodology, overseeing the AI agent and platform, and turning outputs into interpretation and meaningful outcomes.

This combination of AI efficiency and human expertise is what makes AI-powered qualitative research affordable and trustworthy, so researchers can use it more often, ultimately supporting better decisions and better products.